
Is GPU passthrough success better with a VM than an LXC when using Linux? (Bonus points: is there a better distro than others?) Is it really this easy now to do GPU passthrough in Proxmox today?

Another thing I noticed is that when I pass the whole card functionality by simply selecting the root device id, e.g. 81.00 using example below, and the "All Functions" checkbox in GUI, it brings through separate/distinct audio and other stuff (USB in the case of the GTX1660). While I may opt for the audio for sound from the VM in my remote desktop, I'm not sure if I need to do that to get sound, and whether I should skip the other stuff, e.g. USB, if I don't use it in the VM? Would I be able to use what I don't pass through in other VMs - either as a shared resource or passed through - if I left it behind?

I see it does show the "nouveau" driver in the VM as listed in the kernel modules. I thought that meant it wouldn't be included in the kernel because I did: Is the more important thing that it's not listed in the "Kernel driver in use" (see below)? Below is the output of lspci -v for my GTX1660 in both Proxmox and the Ubuntu VM.

I figured since I had to install the drivers in the VM anyway, whether or not I installed them at the Proxmox level, why not just do it once and avoid tainting my Proxmox kernel (a message I see in dmesg in my VM)? I also did it to the minimum that worked - less than what's found in the bottom half of the wiki page mentioned above (and elsewhere). Yes, I did have to start over a couple of times as I tried things out, but that's not the purpose of this system. I might think differently if I were constantly building and blowing away VMs using the GPU device in question, but that simply is not what I'm doing. Other than avoiding tainting the kernel, what am I missing by doing things this way? Are there some not-obvious impacts or unintended consequences related to what I did, or, actually, didn't do?
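For the "Kernel driver in use" versus "Kernel modules" question, here is a minimal Python sketch that pulls both fields out of `lspci -v` style text, so the host and the VM can be compared side by side. The function name `parse_lspci` and the `SAMPLE` text are made up for illustration; the sample is not the actual output from my GTX1660, and the parsing assumes the usual layout where device lines start in column one and detail lines are indented.

```python
# Hypothetical sample in the shape of `lspci -v` output (not real output from this system).
SAMPLE = """\
81:00.0 VGA compatible controller: NVIDIA Corporation (illustrative device)
\tFlags: fast devsel
\tKernel driver in use: vfio-pci
\tKernel modules: nvidiafb, nouveau

81:00.1 Audio device: NVIDIA Corporation (illustrative device)
\tKernel modules: snd_hda_intel
"""


def parse_lspci(text):
    """Map each PCI address to its bound driver and candidate kernel modules."""
    devices = {}
    current = None
    for line in text.splitlines():
        if line and not line[0].isspace():
            # Unindented line: a new device header such as "81:00.0 VGA ...".
            address, _, description = line.partition(" ")
            current = {"description": description, "driver": None, "modules": []}
            devices[address] = current
        elif current is not None:
            # Indented detail line: split "Key: value" at the first colon.
            key, _, value = line.strip().partition(":")
            if key == "Kernel driver in use":
                current["driver"] = value.strip()
            elif key == "Kernel modules":
                current["modules"] = [m.strip() for m in value.split(",")]
    return devices


if __name__ == "__main__":
    for address, info in parse_lspci(SAMPLE).items():
        print(address, "driver:", info["driver"], "modules:", info["modules"])
```

To run it against a real system, the sample could be swapped for `subprocess.run(["lspci", "-v"], capture_output=True, text=True).stdout`. "Kernel modules" lists the modules that could handle the device, while "Kernel driver in use" names the one actually bound to it, so a module can show up in the first field without appearing in the second.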
Instead of doing the blacklisting and driver installation at the Proxmox level, I did it in the individual VMs. I'm thinking it's better that the VM kernels end up tainted than the host kernel (or actually, both).
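To check which kernels actually end up tainted, one option is to read the kernel's taint value on the host and in each VM. The sketch below is a rough Python example, not something from the wiki: the names `TAINT_FLAGS`, `decode_taint` and `read_taint` are my own, and it only decodes the three module-related bits (0, 12 and 13) rather than the full set the kernel defines.

```python
from pathlib import Path

# Subset of the kernel taint bits that relate to loaded modules.
TAINT_FLAGS = {
    0: "P: proprietary module was loaded",
    12: "O: externally-built (out-of-tree) module was loaded",
    13: "E: unsigned module was loaded",
}


def decode_taint(value):
    """Return descriptions for the module-related taint bits set in value."""
    return [desc for bit, desc in sorted(TAINT_FLAGS.items()) if value & (1 << bit)]


def read_taint(path="/proc/sys/kernel/tainted"):
    """Read the current taint value from procfs (Linux only)."""
    return int(Path(path).read_text().strip())


if __name__ == "__main__":
    value = read_taint()
    print("taint value:", value)
    for description in decode_taint(value) or ["no module-related taint bits set"]:
        print(" -", description)
```

Running this on the Proxmox host and again inside a VM would show whether keeping the driver installation in the VMs really leaves the host value at 0.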

Note that some of you may be stuck on the fact that I used a VM, not an LXC. I found using a VM recommended for GPU passthrough success - is that true, or is it outdated too?

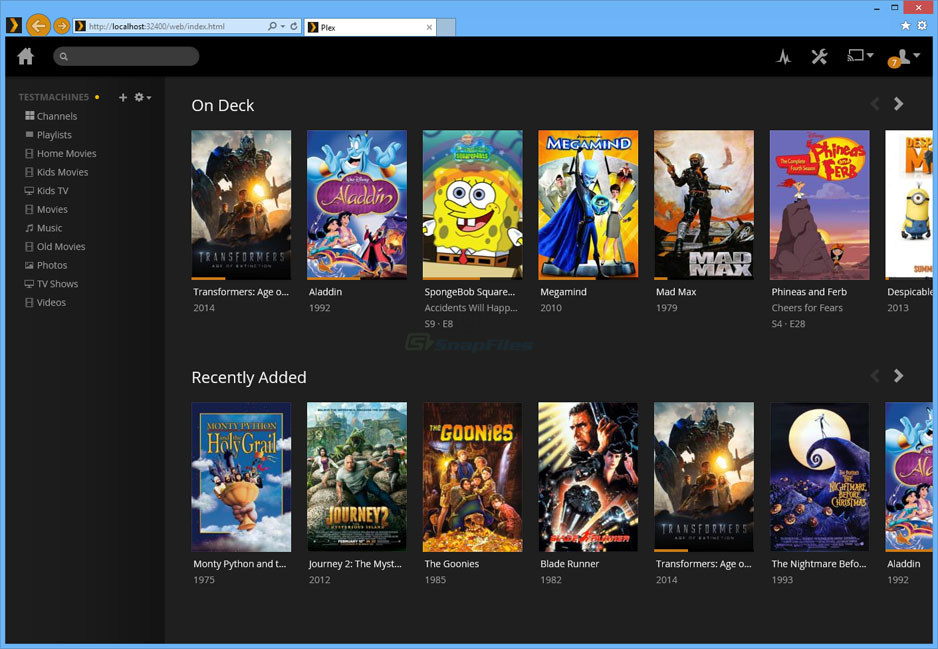

I used to have a VMware ESXi server a few years back that I used for a couple of server workloads and some family VMs accessed through a PC or laptop. After a couple of years I threw out ESXi, turned the host into a FreeNAS box with a couple of plug-ins (Unifi Controller, Plex Media Server), and deployed some Intel NUCs as PCs plus one as a "server" for stuff that didn't run (or run well) on FreeNAS.

I have a few questions to help me (and maybe others) better understand what needs to be done with respect to GPU passthrough these days (February 2023). The posts I've found are dated and usually say to do lots of things that I didn't end up needing to do. While I can't help but think it's just simpler now than it used to be, I also wonder if I'm missing something given the things I left out.

My rig, built on a Supermicro H12SSL-CT motherboard, has 4 GPUs: one on the motherboard and three NVIDIA PCIe cards: a GT710, a GTX1660 and an RTX3060. I successfully passed through the RTX3060 to a Windows 10 VM for gaming, and the GTX1660 to an Ubuntu 20.04 VM to make it my new Plex Media Server by following the first 7 sections at. I didn't do anything after section 7, at least not in Proxmox, except confirm the needed VM machine config info, which was still relevant just about everywhere I went.

First some kudos: I have to say I'm really impressed with Proxmox. For context, I'm talking about a home lab setting here, and am less than a year into it. But for anyone in a similar situation, it's been very usable, reliable, easy to work with, and pretty easy to get the info I need to resolve most problems.
